Can you get too much of a good thing?

When it comes to vitamin A, zinc and niacin, yes, you can.

We need enough of these nutrients for good health, but consuming too much can be harmful – especially to young children, the elderly and pregnant women. Because of flawed government policies and food producers who fortify foods with extra nutrients in the hope of boosting sales, many American children today are getting excessive amounts of certain nutrients.

Find out more in EWG’s latest investigative report, “How Much is Too Much?”


Read the full report for more information about the harmful effects of excess vitamins and minerals; the amounts EWG considers safe; and detailed lists of cereals and snack bars containing nutrient levels high enough to warrant caution for young children and/or pregnant women.

Executive Summary

Getting sufficient amounts of key nutrients is important for a healthy diet, but many Americans don’t realize that consuming excessive amounts of some nutrients can be harmful. Food producers often fortify foods with large amounts of vitamins and minerals to make their products appear more nutritious so they will sell better. Because the Food and Drug Administration’s current dietary Daily Values for most vitamins and minerals were set in 1968 and are woefully outdated, some products may contain fortified nutrients in amounts much greater than the levels deemed safe by the Institute of Medicine, a branch of the National Academy of Sciences.

EWG’s review of fortified foods currently on the market found that young children are at risk of consuming too much of three nutrients – vitamin A, zinc and niacin. Fortified breakfast cereals are the number one source of excessive intake because all three nutrients are added to fortified foods in amounts calculated for adults, not children. The FDA’s current Daily Values for these three nutrients are actually higher than the “Tolerable Upper Intake Level” calculated by the Institute of Medicine for children 8 and younger. Pregnant women and older adults may also be at risk of consuming too much vitamin A from other fortified foods, such as snack bars.

Nutrient content claims are used as marketing tools. High fortification levels in a product can induce consumers to buy certain foods because they seem “healthier” even though they might not be, as the Institute of Medicine has pointed out in multiple reports (IOM 1990; IOM 2010).

Vitamin A, zinc, and niacin are all necessary for health, but doses that are too high can cause toxic symptoms. Routinely ingesting too much vitamin A from foods such as liver or supplements can over time lead to liver damage, skeletal abnormalities, peeling skin, brittle nails and hair loss. These effects can be short-term or long lasting. In older adults, high vitamin A intake has been linked to hip fractures. Taking too much vitamin A during pregnancy can result in developmental abnormalities in the fetus. High zinc intakes can impair copper absorption and negatively affect red and white blood cells and immune function. Niacin is less toxic than vitamin A and zinc, but consuming too much can cause short-term symptoms such as rash, nausea and vomiting.

Although many Americans do not eat enough vitamin-rich vegetables, fruits and other fresh foods and consequently get too little of some vitamins and minerals, ingesting excessive amounts is also unhealthy. Data from the National Health and Nutrition Examination Survey (NHANES) show that with the exception of vitamins D and E and calcium, dietary deficiencies of vitamins and minerals are rare among children 8 and younger in the United States (Berner 2014). For young children, the problem is the opposite – the risk of excessive intake of some nutrients from fortified foods and supplements (Bailey 2012a; Butte 2010).

One recent study by a joint research team of the National Institutes of Health and California Polytechnic State University found that children 8 and younger are at risk of consuming vitamin A, zinc and niacin at levels above the Institute of Medicine’s Tolerable Upper Intake Level. The study found that from food alone, including naturally occurring and fortified sources, 45 percent of 2-to-8-year-old children consume too much zinc, 13 percent get too much vitamin A and 8 percent consume too much niacin (Berner 2014). Similar results have been published by the FDA itself (FDA 2014a) and by a research group at the University of Toronto (Sacco 2013). In contrast, eating fresh foods that naturally contain vitamins and minerals has significant health benefits and, with very few exceptions, has not been linked to excessive vitamin and mineral intake.

It is difficult or impossible to link these nutrient overexposures to specific cases of harm to children’s health, but multiple studies point out that cumulative exposures from fortified food and supplements could put children at risk for potential adverse effects (IOM 2003; IOM 2005). Multiple expert reviews conducted in the United States and in Europe have highlighted the health risks of high vitamin and mineral fortification of foods (BfR 2005; BfR 2006; EFSA 2006; IOM 2001; UK EVM 2003).

EWG analyzed the data on Nutrition Facts labels for breakfast cereals and snack bars, two food categories that are frequently fortified and heavily marketed for children. EWG’s analysis was based on data gathered by FoodEssentials, a company that compiles information on foods sold in American supermarkets. EWG reviewed 1,556 breakfast cereals and 1,025 snack bars, identifying 114 cereals fortified with 30 percent or more of the adult Daily Value for vitamin A, zinc and/or niacin and 27 snack bars fortified with 50 percent or more of the adult Daily Value for at least one of these nutrients.
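The screening step described above amounts to a threshold filter on label data. The sketch below is only an illustration, not EWG’s actual analysis code: the `Product` record layout, the function names and the sample products are hypothetical. Only the cutoffs (30 percent of the adult Daily Value for cereals, 50 percent for snack bars) come from the text.

```python
from dataclasses import dataclass, field

# Nutrients screened in the report.
NUTRIENTS = ("vitamin_a", "zinc", "niacin")

# Percent-of-adult-Daily-Value cutoffs per category, as stated in the text.
THRESHOLDS = {"cereal": 30, "snack_bar": 50}


@dataclass
class Product:
    """Hypothetical label record: name, category and %DV per nutrient."""
    name: str
    category: str
    percent_dv: dict = field(default_factory=dict)


def flagged_nutrients(product: Product) -> list:
    """Screened nutrients at or above the product category's cutoff."""
    cutoff = THRESHOLDS[product.category]
    return [n for n in NUTRIENTS if product.percent_dv.get(n, 0) >= cutoff]


def screen(products: list) -> list:
    """Keep products with at least one nutrient at or above the cutoff."""
    return [p for p in products if flagged_nutrients(p)]


# Hypothetical sample labels, for illustration only.
sample = [
    Product("Cereal X", "cereal", {"vitamin_a": 10, "zinc": 100, "niacin": 100}),
    Product("Cereal Y", "cereal", {"vitamin_a": 10, "zinc": 25, "niacin": 25}),
    Product("Bar Z", "snack_bar", {"vitamin_a": 50, "zinc": 20, "niacin": 20}),
]
for p in screen(sample):
    print(p.name, flagged_nutrients(p))
```

In this sample, "Cereal Y" is not flagged at 25 percent, even though the FDA already considers 20 percent of the Daily Value a "high" level.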

A number of factors make children’s excessive intake of vitamin A, zinc and niacin a health concern:

• These micronutrients are present naturally in food and are also added to many foods children and toddlers eat, including milk, meat, enriched bread and snacks.

• Many cereals and snack bars are fortified at levels that the FDA considers high, exceeding the amounts children need and in some cases exceeding the safe upper limits for young children in a single serving.

• Intentional or accidental fortification “overages” by manufacturers can make actual exposures greater than the amounts indicated on the nutrition label.

• Many children eat more than a single serving at a sitting because the serving sizes listed on many packaged foods do not reflect the larger amounts people actually eat.

• A third of all children, and as many as 45 percent of the younger age groups, take dietary supplements (Bailey 2013).

Excessive exposure to fortified nutrients is the result of unscrupulous marketing, flawed nutrition labeling and outdated fortification policy. The current nutrition labeling system puts children’s health at risk and is in dire need of reform. The FDA’s recently proposed reforms (FDA 2014b; FDA 2014c) are a step in the right direction, but they remain insufficient to protect children’s health from over-consumption of fortified micronutrients.

To address these problems, the FDA must set percent Daily Value levels that reflect current science; require nutrition labels on products marketed for children to display percent Daily Values specific to each age group, such as 1-to-3-year-olds and 4-to-8-year-olds; and update the serving sizes cited on Nutrition Facts labels to accurately reflect the larger amounts that Americans actually eat. The FDA should also modernize its 1980 guidelines on voluntary food supplementation, particularly for products that children eight years old and younger may eat. Food fortification policy must be based on specific risk assessments for each nutrient and for specific population groups.

EWG recommends that parents give their children products with no more than 20-to-25 percent of the adult Daily Value for vitamin A, zinc and niacin and monitor their children’s intake of these and other foods so kids do not get too much of these nutrients. Because too much vitamin A can cause birth defects, EWG also recommends that pregnant women watch their intake of products fortified with vitamin A, especially if they are taking a vitamin pill. Older adults should also carefully monitor vitamin A in their diets and supplements in order to avoid the risks of osteoporosis and hip fractures associated with high vitamin A intake.

Finally, it is critical that the FDA take seriously the question of how food manufacturers may misuse food fortification guidelines and nutrient content claims to sell more products, particularly those of little nutritional value.

Up to Half of Young Children Get Too Much Vitamin A, Zinc and Niacin

For vitamins and minerals, there are health risks to consuming too much as well as too little. Adequate amounts are essential to maintain health and prevent disease, and historically deficiencies of essential vitamins have caused diseases such as scurvy, pellagra and rickets. In developed countries, however, economic advances over the last century have significantly improved diets, resulting in a better dietary supply of many nutrients.

Paradoxically, however, widespread use of dietary supplements and extensive mandatory and voluntary fortification of foods with vitamins and minerals have created the opposite danger – excessive intake. It’s still important for everyone to get an adequate supply of micronutrients, but it is also vital to make sure people don’t get too much of certain vitamins and minerals, because overconsumption can also cause health problems (BfR 2005; BfR 2006; Dwyer 2014; IOM 2003).

Between 1997 and 2001, the Institute of Medicine calculated what it calls Tolerable Upper Intake Levels (UL) for various nutrients, which it defined as “the highest level of daily nutrient intake that is likely to pose no risk of adverse health effects to almost all individuals in the general population.” As intake increases above these upper levels, the risk of harmful effects increases (IOM 1998a). Tolerable Upper Intake Levels were calculated for specific age groups because both the daily needs and the tolerance of excess nutrients vary depending on body size and specific nutritional requirements (Verkaik-Kloosterman 2012; IOM 1998a). The European Union and the United Kingdom conducted their own assessments of the safe upper amount of vitamin and mineral intake and established upper limits or guidance levels that are similar overall to those of the Institute of Medicine (EFSA 2006; UK EVM 2003).

According to a recently published analysis of dietary intake data from the National Health and Nutrition Examination Survey 2003-2006, from food alone 8 percent of 2-to-8-year-old children consume too much niacin, 13 percent consume too much vitamin A and 45 percent consume too much zinc (Berner 2014). This analysis included both naturally occurring and fortified micronutrients in food. Potential over-exposures are much higher among the 42 percent of children in this age group whose parents give them dietary supplements (Bailey 2012a). These supplements vary greatly and can include multivitamins, multivitamins with minerals, or individual vitamin and mineral preparations (Bailey 2013). Many supplements, such as gummy vitamins, are formulated to be more palatable to children.

Taking into account both supplement users and non-users, EWG calculated that more than 10 million American children are getting too much vitamin A; more than 13 million get too much zinc; and nearly 5 million get too much niacin (Table 1).

Table 1: Many 2-to-8-year-old children are at risk of excessive nutrient intake

| Fortified nutrient | Health effects associated with excessive intake | % of 2-to-8-year-olds exceeding IOM’s tolerable intake level*: children who don’t take supplements | % exceeding IOM’s tolerable intake level*: children who take supplements | Estimated number of 2-to-8-year-olds over-exposed from food and supplements** |
|---|---|---|---|---|
| Vitamin A | Liver damage; brittle nails; hair loss; skeletal abnormalities; osteoporosis and hip fracture (in older adults); developmental abnormalities (of the fetus) | 13% | 72% | 10.6 million |
| Zinc | Impaired copper absorption; anemia; changes in red and white blood cells; impaired immune function | 45% | 53-84% | 13.5-17.2 million |
| Niacin (B3) | Skin reactions (flushing, rash); nausea; liver toxicity | 8% | 28% | 4.7 million |

* Data from Bailey 2012a and Berner 2014. Bailey reported that 84 percent of supplement users exceed the UL for zinc; Berner reported that 53 percent of supplement users exceed the UL for zinc.

** 42 percent of 2-to-8-year-olds take supplements (Bailey 2012a). According to 2010 Census data, there are 28 million children in the 2-to-8 age group (4 million children per year of age). For the 58 percent who do not use supplements, the overexposed population is based on food alone (third column). For the 42 percent who do take supplements, the overexposed population is based on both food and supplements (fourth column). The two numbers were added to estimate the total number overexposed from both sources.
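The footnote’s arithmetic can be reproduced in a few lines. This is a minimal sketch using only the figures quoted above (28 million children, 42 percent supplement use, and the exceedance percentages in Table 1); the names are illustrative. With these inputs it gives about 10.6 million for vitamin A and 13.5-17.2 million for zinc, and about 4.6 million for niacin, slightly below the table’s 4.7 million, a gap that may come from rounding in the published percentages.

```python
# Arithmetic from the Table 1 footnote (**), reproduced as a sketch.
TOTAL_CHILDREN_M = 28.0  # 2-to-8-year-olds, in millions (2010 Census, per the text)
SUPPLEMENT_SHARE = 0.42  # share taking supplements (Bailey 2012a)

# Share over the UL as (non-users: food alone, users: food plus supplements).
EXCEEDANCE = {
    "vitamin A": (0.13, 0.72),
    "zinc, low estimate": (0.45, 0.53),
    "zinc, high estimate": (0.45, 0.84),
    "niacin": (0.08, 0.28),
}


def overexposed_millions(nonuser_rate: float, user_rate: float) -> float:
    """Children over the UL, in millions: non-users plus supplement users."""
    users = TOTAL_CHILDREN_M * SUPPLEMENT_SHARE
    nonusers = TOTAL_CHILDREN_M - users
    return nonusers * nonuser_rate + users * user_rate


for nutrient, (nonuser_rate, user_rate) in EXCEEDANCE.items():
    print(f"{nutrient}: {overexposed_millions(nonuser_rate, user_rate):.1f} million")
```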

The data in Table 1 agree with the FDA’s analysis that 33 percent of 4-to-8-year-old children ingest zinc in excess of the IOM’s Tolerable Upper Intake Level and 26 percent are over-exposed to vitamin A (FDA 2014a). The FDA did not analyze niacin intake (FDA 2014a).

Routinely ingesting too much vitamin A over a long time — from foods such as liver, fortified foods or supplements — can lead to liver damage, skeletal abnormalities, peeling skin, brittle nails and hair loss. These effects can be short-term or long lasting. In older adults, high vitamin A intake has been linked to hip fractures. Taking too much vitamin A during pregnancy can result in developmental abnormalities in the fetus. High zinc intakes can impair copper absorption and negatively affect red and white blood cells and immune function. Niacin is less toxic than vitamin A and zinc, but excessive niacin intake can cause short-term symptoms such as rash, nausea and vomiting.

"Added nutrients are about marketing, not health."

– Marion Nestle, author and professor of nutrition, food studies and public health, New York University (Nestle 2013)

In a 2005 report for the federal Women, Infants and Children (WIC) nutrition program, the Institute of Medicine warned that excessive exposure to dietary vitamin A and zinc posed a risk to formula-fed infants and 1-to-4-year-olds. In a more recent study, researchers at the Baylor College of Medicine, the NIH Office of Dietary Supplements and Nestlé Infant Nutrition reported that when all sources of nutrition were combined, 59 percent of 2-to-4-year-old children exceeded the Upper Intake Level for vitamin A and 72 percent exceeded the limit for zinc (Butte 2010).

What Major Studies Have Concluded

"More than 7 percent of children (2–8 y) usually have nutrient intakes from foods alone that exceed the UL [Tolerable Upper Intake Level] for copper, selenium, folic acid, vitamin A and zinc, with zinc being the most notable (45 percent of [supplement] non-users and 84 percent of [supplement] users reporting usual intakes > UL)" (Bailey 2012a).

"Supplement use did increase the prevalence of intakes above the UL for iron, zinc, vitamin A and folic acid in all age groups and for vitamin C, copper, and selenium in those 2–8 y." (Bailey 2012a).

"Intakes of synthetic folate, preformed vitamin A*, zinc, and sodium exceeded Tolerable Upper Intake Level in a significant proportion of toddlers and preschoolers" (Butte 2010).

"High proportions of formula-fed WIC infants and WIC children ages 1 through 4 years had estimated usual intakes of zinc and preformed vitamin A that exceeded the UL" (Institute of Medicine 2005).

* Preformed vitamin A refers to retinol, retinyl palmitate, or retinyl acetate, which are readily converted in the body into retinoic acid, the active form of vitamin A. The activity of preformed vitamin A is 12 to 24 times higher than that of carotenoids such as beta-carotene, which are vitamin A precursors.

Overconsumption of these nutrients is less of a concern for older children because their greater body weight raises both their nutritional needs and tolerable upper intake levels. However, even 9-to-13-year-olds who take daily multivitamins and consume fortified foods may ingest too much zinc and vitamin A. About 32 percent of 9-to-13-year-olds who take supplements exceed the Institute of Medicine’s upper limit for zinc, and 21 percent exceed the limit for vitamin A (Bailey 2012a). Niacin intake from all sources exceeds the upper limit in 11 percent of 9-to-13-year-old children (Berner 2014).

There are two major reasons for children’s excessive consumption of fortified nutrients in food: industry marketing and the FDA’s outdated system of nutrition labeling.

As EWG detailed in its May 2014 report, “Children’s Cereals: Sugar By the Pound,” research has shown that nutrition claims on packaging influence how consumers perceive the overall healthfulness of foods (Drewnowski 2010). One recent study of parents of school-age children found that half were more willing to buy cereals that carried a nutrition claim even though the products were of below-average nutritional quality (Harris 2011a). The authors wrote that the nutrition-related claims “have the potential to mislead a significant portion of consumers” (Harris 2011a).

Other experts on food labeling have come to the same conclusion. Jennifer Pomeranz, a professor of law and public health at Temple University, wrote last year in the American Journal of Law and Medicine that, “Perhaps the most problematic result of these lax regulations is that products high in added sugar carry a wide variety of nutrient content claims, which misleadingly convey healthfulness in an otherwise unhealthy product” (Pomeranz 2013).

Dr. Marion Nestle, the renowned author and professor of nutrition at New York University, summed it up best in an August 2013 blog post titled, “FDA study: Do added nutrients sell products? (Of course they do).” She wrote:

“Food marketers know perfectly well that nutrients sell food products. The whole point of doing so is to be able to make nutrient-content claims on package labels… Plenty of research demonstrates that nutrients sell food products. Any health or health-like claim on a food product – vitamins added, no trans fats, organic – makes people believe that the product has fewer calories and is a health food… As I keep saying, added vitamins are about marketing, not health.”

(Nestle 2013)

The Food and Drug Administration’s system of Nutrition Facts labels would be one way to counter industry’s misleading marketing. Instead the labels compound the problem, because the labels’ percent Daily Values for nutrition content are outdated and are based only on adult dietary needs. Many of the FDA Daily Value levels were set in 1968 — more than 40 years ago — when nutritional deficiencies were still the primary concern. And the FDA does not require age-appropriate Daily Value labeling on products specifically targeted at children.

When a consumer picks up a box of cereal covered in cartoon characters that is clearly marketed to children and sees that one serving provides 50 percent of the Daily Value for vitamin A, she may think that it provides 50 percent of a child’s recommended intake. She would most likely be wrong. If the label has the signature small-print phrase, “Percent Daily Values are based on a 2,000 calorie diet,” that means that the nutrition label is based on the adult Daily Values.

Moreover, for vitamin A, zinc and niacin, the numbers described by FDA as “Daily Value for adults and children 4 or more years of age” actually exceed the Tolerable Upper Intake Levels for children 8 and younger (Table 2).

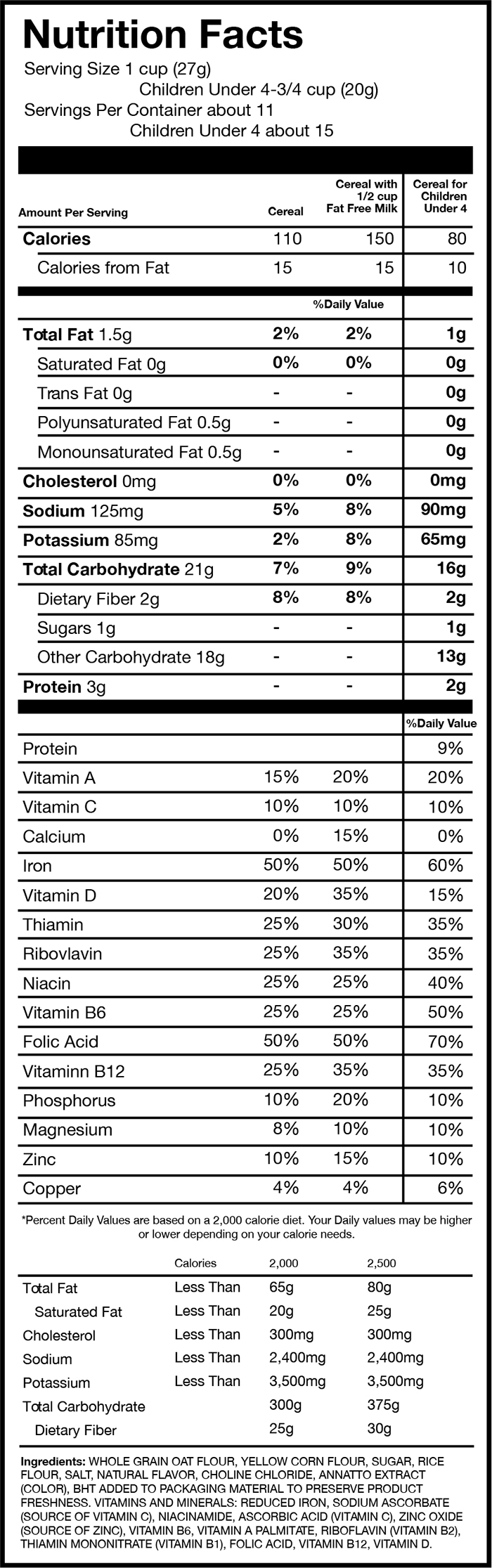

While a few products marketed for toddlers and young children do voluntarily list Daily Values specific for this age group, the FDA does not require such labeling. EWG found two brand-name products currently on the market that have labels for both adults and children younger than 4 — Post C is for Cereal and General Mills’ Cheerios (original formulation). The vast majority of cereals — including most children’s cereals — display just one set of Daily Values, those for adults.

Table 2: Nutrition Label Daily Values for vitamin A, zinc and niacin exceed Tolerable Upper Intake Levels for children 8 and younger

| Fortified nutrient | Tolerable Upper Intake Level for 1-to-3-year-old children | Tolerable Upper Intake Level for 4-to-8-year-old children | Current FDA Daily Value for adults and children 4 years old and up |
|---|---|---|---|
| Vitamin A* | 600 µg RAE/day | 900 µg RAE/day | 5,000 IU (1,500 µg RAE) |
| Zinc | 7 mg/day | 12 mg/day | 15 mg/day |
| Niacin | 10 mg/day | 15 mg/day | 20 mg/day |

* RAE (retinol activity equivalents) is the measure now used to express vitamin A activity. Health concerns apply only to preformed vitamin A, such as retinyl palmitate or retinol, not to naturally occurring vitamin A precursors such as beta-carotene and other carotenoids. The current FDA Daily Value for vitamin A is expressed in outdated international units (IU). 5,000 IU corresponds to 1,500 µg (micrograms) of retinol activity equivalents (RAE).
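The unit conversion in this footnote and the comparison in Table 2 can be checked directly. This sketch assumes the 5,000 IU Daily Value refers to preformed vitamin A, for which 1 IU equals 0.3 µg of retinol (that factor does not apply to beta-carotene). The dictionary and function names are illustrative. Every ratio it prints is above 100 percent: the adult Daily Value exceeds the children’s upper limit for all three nutrients in both age groups.

```python
# Adult Daily Values vs IOM Tolerable Upper Intake Levels (values from Table 2).
IU_TO_UG_RAE = 0.3  # assumption: 1 IU of preformed vitamin A (retinol) = 0.3 µg RAE


def iu_to_ug_rae(iu: float) -> float:
    """Convert preformed vitamin A from international units to µg RAE."""
    return iu * IU_TO_UG_RAE


DAILY_VALUE = {
    "vitamin A (ug RAE)": iu_to_ug_rae(5000),
    "zinc (mg)": 15,
    "niacin (mg)": 20,
}
UL = {
    "vitamin A (ug RAE)": {"1-3": 600, "4-8": 900},
    "zinc (mg)": {"1-3": 7, "4-8": 12},
    "niacin (mg)": {"1-3": 10, "4-8": 15},
}


def dv_to_ul_ratios() -> dict:
    """Map (nutrient, age group) to adult Daily Value divided by the child UL."""
    return {
        (nutrient, age): DAILY_VALUE[nutrient] / limit
        for nutrient, limits in UL.items()
        for age, limit in limits.items()
    }


for (nutrient, age), ratio in dv_to_ul_ratios().items():
    print(f"{nutrient}, ages {age}: adult DV is {ratio:.0%} of the UL")
```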

As a result of the excessively high Daily Values used for nutrition labeling and the FDA’s lax, unenforceable and outdated food fortification policy, millions of children are ingesting potentially unhealthy amounts of vitamins and minerals from fortified foods.

Sources of Added Vitamins and Minerals in Children’s Food

Vitamin A, zinc and niacin all occur naturally. They are also added to foods that young children eat regularly.

Table 3: Sources of added vitamin A, zinc and niacin in the diets of 2-to-8-year-old children

| Food | Percent contribution |
|---|---|
| Preformed vitamin A | |
| Ready-to-eat cereal | 42.6% |
| Milk | 25.1% |
| Milk drinks | 11.7% |
| Pasta dishes | 3.5% |
| Margarine, butter | 3.1% |
| Niacin | |
| Ready-to-eat cereal | 51.6% |
| Yeast bread, rolls | 10.4% |
| Pizza | 6.9% |
| Pasta dishes | 5.1% |
| Cakes and cookies | 4.8% |
| Zinc | |
| Ready-to-eat cereal | 96.8% |

Source: National Health and Nutrition Examination Survey 2003-2006, Berner 2014

Vitamin A is the common name for a group of fat-soluble substances present in two forms in food: preformed vitamin A (retinol and retinyl esters such as retinyl palmitate and retinyl acetate) and vitamin A precursor carotenoids (beta-carotene, alpha-carotene, and many others) that the body converts into retinol or retinoic acid. Vitamin A precursors are also called provitamin A. Different chemical forms of vitamin A play specific roles in the body. Retinol is important for eyesight. Retinoic acid supports normal body growth and development and plays a critical role in the formation and maintenance of organs and tissues. Retinoic acid is also a key regulator of numerous growth and metabolic processes.

Preformed vitamin A such as retinyl palmitate is rapidly converted first to retinol and then to retinoic acid. Excessive production of retinoic acid caused by ingesting large amounts of preformed vitamin A can disrupt bodily functions and cause overt toxicity.

In contrast, ingesting large amounts of naturally occurring dietary provitamin A carotenoids is not associated with toxicity. That’s because it takes multiple metabolic reactions to convert provitamin A precursors into retinol, generating relatively small amounts of both retinol and retinoic acid. Retinol has 12 times the activity of beta-carotene and 24 times the activity of alpha-carotene.
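The retinol activity equivalent arithmetic can be sketched using only the ratios given above: retinol has 12 times the activity of dietary beta-carotene and 24 times that of alpha-carotene. The function name and sample amounts are hypothetical. The function returns the preformed share separately because, as the Table 2 footnote notes, the health concerns apply only to preformed vitamin A.

```python
# Vitamin A activity in retinol activity equivalents (RAE), using the ratios
# stated in the text: 12 µg dietary beta-carotene or 24 µg dietary
# alpha-carotene supply the activity of 1 µg retinol.
BETA_CAROTENE_UG_PER_RAE = 12.0
ALPHA_CAROTENE_UG_PER_RAE = 24.0


def vitamin_a_activity(retinol_ug: float, beta_carotene_ug: float = 0.0,
                       alpha_carotene_ug: float = 0.0) -> tuple:
    """Return (total µg RAE, µg RAE from preformed vitamin A only)."""
    total = (retinol_ug
             + beta_carotene_ug / BETA_CAROTENE_UG_PER_RAE
             + alpha_carotene_ug / ALPHA_CAROTENE_UG_PER_RAE)
    return total, retinol_ug


# Hypothetical foods with the same total activity but different forms:
# one carotenoid-only, one fortified with preformed vitamin A.
print(vitamin_a_activity(0, beta_carotene_ug=3600))
print(vitamin_a_activity(300))
```

Both hypothetical foods supply the same total activity, but only the second contributes toward the Tolerable Upper Intake Level.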

Preformed vitamin A occurs naturally in dairy products, eggs, fish and meat, especially liver. Carotenoids are found in bright yellow and orange vegetables such as carrots, pumpkin and sweet potatoes, as well as in dark green vegetables such as broccoli and spinach. Preformed vitamin A, either as retinyl palmitate (most common) or retinyl acetate, is frequently added to milk and milk-based drinks, butter spreads, cereals, snack bars, cookies and cakes.

The FDA has rules regarding vitamin A fortification of butter spreads (margarine) and milk. Butter spreads typically contain 10 percent of the adult Daily Value per serving. Fortified milk can contain 10-15 percent of the adult Daily Value per serving. There are no limits on vitamin A fortification of other foods.

Niacin, or vitamin B3, encompasses nicotinic acid, niacinamide (also called nicotinamide or nicotinic acid amide) and their derivatives. Niacin occurs naturally in foods such as meat, fish, sunflower seeds and peanuts, as well as in whole grains such as whole wheat and brown rice. The liver can also synthesize niacin from the amino acid tryptophan, a building block for proteins.

The Institute of Medicine concluded in 1998 that niacin intake in the United States is “generous” (IOM 1998). Nevertheless, food producers enrich a variety of foods with additional niacin (often in the form of niacinamide), including cereals, breads and flour-based dishes such as pizzas and cakes. While there is no evidence of harm from naturally occurring niacin, excess niacin in fortified foods or supplements has been linked to harmful effects.

The FDA requires the addition of certain micronutrients to processed grain foods such as breads, corn meal, flour, macaroni and rice to compensate for the loss of these nutrients during flour bleaching and processing. The typical micronutrients added to enrich cereal grains are thiamin, riboflavin, niacin, iron and folic acid (Yamini 2012). For niacin, the legally required enrichment amount is 15-34 mg/pound, which provides 8-15 percent of the adult Daily Value per serving, depending on the food. Breakfast cereals are often fortified to much higher levels, up to 100 percent of the adult Daily Value.

Zinc is an essential mineral. It is naturally present in many foods, including milk and meat products as well as grain-based products. It is a component of dietary supplements as well as cold lozenges and some over-the-counter cold remedies. Breakfast cereals are the number one source of added zinc in the diets of children. The FDA does not limit the amount of zinc added to fortified foods.

EWG Identified 23 Excessively Fortified Cereals

Because the FDA’s current Daily Values for vitamin A, zinc and niacin are so out of sync with what the Institute of Medicine considers healthy for children, a single serving of some fortified foods can overexpose them to one or more of these nutrients.

Fortified breakfast cereals are the number one source of added vitamin A, zinc and niacin in children’s diets (Table 3). EWG analyzed the Nutrition Facts panels of 1,556 cereals and identified 23 with the highest added doses. A child age 8 or younger eating a single serving of any of them would exceed IOM’s safe level. The 23 cereals with the highest added nutrient levels include such popular and well-known national brands as Kellogg’s and General Mills and store brands such as Food Lion, Safeway and Stop & Shop (Table 4).

Table 4: A single serving of these 23 cereals would exceed IOM’s safe limit of one or more nutrients for children 8 and younger*

| Breakfast cereals, in alphabetical order | Zinc | Niacin | Vitamin A |
|---|---|---|---|
| Essential Everyday Bran Flakes Cereal | ✔ | ✔ | |
| Food Club Essential Choice Bran Flakes | ✔ | ✔ | |
| Food Lion Enriched Bran Flakes Cereal | ✔ | ✔ | |
| Food Lion Whole Grain 100 Cereal | ✔ | ✔ | |
| General Mills Total + Omega-3 Honey Almond Flax | ✔ | ✔ | |
| General Mills Total Raisin Bran | ✔ | ✔ | |
| General Mills Total Whole Grain | ✔ | ✔ | |
| General Mills Wheaties Fuel | | ✔ | |
| Giant Eagle Bran Flakes | ✔ | ✔ | |
| Great Value Multi Grain Flakes | ✔ | ✔ | |
| Kashi U 7 Whole Grain Flakes & Granola with Black Currants & Walnuts | | | ✔** |
| Kellogg's All-Bran Complete, Wheat Flakes | ✔ | ✔ | |
| Kellogg's Cocoa Krispies (single 2.3 oz serving in a plastic container) | | | ✔** |
| Kellogg's Krave, Chocolate (single 1.87 oz serving in a plastic container) | | | ✔ |
| Kellogg's Product 19 | ✔ | ✔ | |
| Kellogg's Smart Start, Original Antioxidants, Antioxidant Vitamins A, C & E, Including Beta Carotene | ✔ | ✔ | |
| Kemach Whole Wheat Flakes Cereal | ✔ | ✔ | |
| Kiggins Bran Flakes | ✔ | ✔ | |
| Roundy's Bran Flakes | ✔ | ✔ | |
| Safeway Kitchens Bran Flakes | ✔ | ✔ | |
| Shop Rite Bran Flakes | ✔ | ✔ | |
| Shur Fine Wheat Bran | ✔ | ✔ | |
| Stop&Shop Source 100 | ✔ | ✔ | |

* See Table A1 in Appendix A for details on the Daily Value levels for each cereal.

** These cereals contain 50 percent of the adult Daily Value for vitamin A per serving and reach the limit when eaten with milk. Vitamin A-fortified milk can contain 10-15 percent of the adult Daily Value, corresponding to 150-225 µg RAE (retinol activity equivalents). Eaten with one cup of milk containing 10 percent of the adult Daily Value, a serving of these cereals provides 900 µg RAE, reaching the 900 µg RAE/day Tolerable Upper Intake Level (UL) for 4-to-8-year-old children.
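The footnote’s sum can be written out as a short calculation. This sketch assumes, as the footnote’s own arithmetic implies, that the 50 percent figure refers to the cereal alone (750 µg RAE). It uses only the adult Daily Value of 1,500 µg RAE and the 900 µg RAE upper limit for ages 4 to 8; the names are illustrative.

```python
# Vitamin A from one serving of cereal plus one cup of milk, from label %DV.
VITAMIN_A_DV_UG_RAE = 1500   # adult Daily Value: 5,000 IU = 1,500 µg RAE
UL_AGES_4_TO_8_UG_RAE = 900  # IOM Tolerable Upper Intake Level, ages 4-8


def breakfast_vitamin_a(cereal_pct_dv: float, milk_pct_dv: float) -> float:
    """µg RAE supplied by cereal and milk, each given as percent of the adult DV."""
    return (cereal_pct_dv + milk_pct_dv) / 100 * VITAMIN_A_DV_UG_RAE


for milk_pct_dv in (10, 15):  # fortified-milk range cited in the footnote
    total = breakfast_vitamin_a(50, milk_pct_dv)
    print(f"cereal 50% DV + milk {milk_pct_dv}% DV: {total:.0f} ug RAE, "
          f"{total / UL_AGES_4_TO_8_UG_RAE:.0%} of the ages 4-8 UL")
```

With milk at the upper end of the cited range (15 percent), the same bowl supplies 975 µg RAE, above the limit for 4-to-8-year-olds before any other food or supplement is counted.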

EWG’s analysis was performed on data gathered by FoodEssentials, a company that compiles information about foods sold in American supermarkets. EWG also reviewed manufacturers’ websites for data confirmation and to collect additional nutrition information. Cereal package label information was gathered between Sept. 15, 2012 and March 13, 2014 and represents a snapshot of the market over that period. Overall, 1,334 labels dated from 2013, 205 dated from 2014 and 17 dated from 2012.

The cereals in Table 4 are not the only sources of concern. In all, 114 cereals contain 30 percent or more of the adult Daily Value (DV) of either vitamin A, zinc or niacin in a single serving (summary statistics in Table 5). Some cereals contained two of the three nutrients at 30 percent or more of the adult Daily Value (25 with zinc and niacin; 10 with vitamin A and niacin; 1 with zinc and vitamin A). Children who eat cereals that are high in one or more of these three nutrients along with other fortified foods and/or supplements could easily be overexposed.

Table 5: Fortification levels for cold and hot cereals

Type of cereal (of 1,556 total)**

| Fortified nutrient* | Cold | Granolas | Instant hot | Non-instant hot |
|---|---|---|---|---|
| Vitamin A | 8 (0.9%) | 0 | 7 (2.6%) | 1 (0.4%) |
| Vitamin A | 176 (20%) | 2 (1%) | 152 (67%) | 4 (1.5%) |
| Zinc | 29 (3%) | 0 | 0 | 0 |
| Zinc | 266 (31%) | 10 (5%) | 1 (0.4%) | 0 |
| Niacin | 100 (12%) | 3 (1.5%) | 3 (1.3%) | 1 (0.4%) |
| Niacin | 483 (56%) | 15 (7.4%) | 145 (63%) | 9 (3.4%) |

* The FDA considers fortification at 20 percent or more of the Daily Value “high” (FDA 2004).

** Four of the products are baby cereals, not included in the table.

EWG’s analysis shows that on average, cold cereals are the most likely to be fortified and have the highest levels of fortification, followed by instant hot cereals. Of the 114 cereals that provide 30 percent or higher of the adult Daily Value for vitamin A, zinc or niacin in a single serving, only one is a (non-instant) hot cereal, three are granolas, seven are instant hot cereals and 103 are cold cereals.

American children and adults often eat more than a single serving of cereal daily because many manufacturers list unrealistically small serving sizes on the Nutrition Facts label. Many cereals list a serving size of 30 grams, corresponding to ¾ cup or 1 cup, but both food industry and academic studies have found that many children eat much larger amounts in a single sitting. A study by General Mills found that 6-to-18-year-old children and adolescents eat about twice as much in a meal – an average of 42-to-62 grams (Albertson 2011). A study by Yale University’s Rudd Center for Food Policy and Obesity found that 5-to-12-year-old children ate an average of 35 grams of low-sugar cereals and an average of 61 grams of high-sugar cereals (Harris 2011b).

The FDA’s analysis of food intake data from the National Health and Nutrition Examination Survey (NHANES) 2003-2008 found that at least 10 percent of Americans eat 2-to-2.6 times the labeled serving size at a sitting (FDA 2014c).
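Because the percent Daily Value on a label is stated per labeled serving, the amount a child actually consumes scales with the portion poured. The sketch below is illustrative only: it assumes the nutrient is evenly distributed through the product (so intake scales linearly with weight), expresses vitamin A in μg RAE using the 5,000 IU = 1,500 μg conversion used elsewhere in this report, and uses a hypothetical helper name, `amount_eaten`. It applies a 30-gram labeled serving to the 61-gram average portion of high-sugar cereal reported by the Rudd Center study.

```python
# Current adult Daily Values used on labels (vitamin A converted to μg RAE).
ADULT_DV = {"vitamin A (μg RAE)": 1500.0, "zinc (mg)": 15.0, "niacin (mg)": 20.0}


def amount_eaten(percent_dv: float, nutrient: str,
                 label_serving_g: float, eaten_g: float) -> float:
    """Absolute amount of `nutrient` in `eaten_g` grams of a product whose label
    lists `percent_dv` percent of the adult Daily Value per `label_serving_g` grams.

    Assumption (hypothetical helper): the nutrient is spread evenly through the
    product, so intake scales linearly with the weight eaten.
    """
    per_label_serving = ADULT_DV[nutrient] * percent_dv / 100.0
    return per_label_serving * eaten_g / label_serving_g


if __name__ == "__main__":
    # A cereal labeled 30% of the adult DV for niacin per 30 g serving,
    # eaten in the 61 g portion reported for high-sugar cereals.
    mg = amount_eaten(30, "niacin (mg)", label_serving_g=30, eaten_g=61)
    print(f"niacin eaten: {mg:.1f} mg ({61 / 30:.2f} labeled servings)")
```

With these inputs, the 61-gram bowl delivers just over two labeled servings' worth of niacin, about 12.2 mg.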

For cereals fortified to 30 percent or more of the adult Daily Value for vitamin A, zinc, or niacin, 2½ servings would exceed or come close to exceeding the Institute of Medicine’s safe daily levels for a child 8 or younger, even if the child got none from other sources. EWG found that 114 cereals, 7 percent of the 1,556 analyzed, were fortified at 30 percent or more of the adult Daily Value per serving for at least one of three nutrients. (See Appendix A for full list.)
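The 2½-serving arithmetic can be checked directly. This minimal sketch assumes the cereal is the child's only source of these nutrients, that every serving matches its label (no overages), and that all of the vitamin A is preformed, the form to which the Upper Level applies; the helper `intake_vs_ul` is hypothetical. It compares 2.5 servings at 30 percent of the adult Daily Value with the IOM Tolerable Upper Intake Levels for 4-to-8-year-olds listed in Table 8.

```python
# Current adult Daily Values (vitamin A in μg RAE: 5,000 IU = 1,500 μg).
ADULT_DV = {"vitamin A (μg RAE)": 1500.0, "zinc (mg)": 15.0, "niacin (mg)": 20.0}
# IOM Tolerable Upper Intake Levels for 4-to-8-year-olds (Table 8).
UL_4_TO_8 = {"vitamin A (μg RAE)": 900.0, "zinc (mg)": 12.0, "niacin (mg)": 15.0}


def intake_vs_ul(percent_dv: float, servings: float) -> dict[str, tuple[float, float]]:
    """Return {nutrient: (daily intake, intake as a fraction of the UL)}.

    Assumes the cereal is the only source and each serving matches the label.
    """
    result = {}
    for nutrient, dv in ADULT_DV.items():
        intake = dv * percent_dv / 100.0 * servings
        result[nutrient] = (intake, intake / UL_4_TO_8[nutrient])
    return result


if __name__ == "__main__":
    for nutrient, (intake, frac) in intake_vs_ul(30, 2.5).items():
        print(f"{nutrient}: {intake:g} = {frac:.0%} of the 4-to-8 UL")
```

Under these assumptions, vitamin A reaches 125 percent of the limit, niacin reaches it exactly and zinc comes to about 94 percent; the limits for 1-to-3-year-olds are lower still.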

The fact that many children take vitamin pills every day complicates the situation even more. According to a recent study by the National Institutes of Health’s Office of Dietary Supplements and the Centers for Disease Control and Prevention, 45 percent of 2-to-5-year-old children and 36 percent of 6-to-11-year-olds take supplements (Bailey 2013).

A child who takes a daily multivitamin and drinks milk gets sufficient vitamin A from these sources alone. Eating any amount of vitamin A-fortified cereal would exceed the Institute of Medicine’s recommended limit. Zinc and niacin are also common in many dietary supplements. As with vitamin A, children who take these supplements could easily exceed the safe limits if they also eat fortified cereals.

Even with children who do not take supplements, parents should exercise caution to avoid excessive consumption of these nutrients. A single serving of any food with 20 percent of the adult Daily Value per serving provides a complete or nearly complete recommended dietary allowance for vitamin A, zinc and niacin for 1-to-3-year-old children. A serving with 20 percent of the adult Daily Value provides 50-75 percent of the recommended allowance for 4-to-8-year-old children (Table 7). Eating multiple servings of different foods fortified at more than 20-to-25 percent of the adult Daily Value can put children 8 and younger at risk of exceeding IOM’s tolerable limits.

Snack Bars: Another Source of Added Nutrients

American children and adults alike enjoy snack bars, which are often advertised as “health” or “quick burst of energy” foods. Many parents put a snack bar in their child’s lunch box or sports bag for an afternoon nibble. Sports and outdoor enthusiasts often take along energy and nutrition bars for long trips and endurance challenges. Even office workers may have a snack bar close at hand for a late-night work crunch or a mid-morning snack.

EWG’s analysis revealed that snack and energy bars are a surprising source of added nutrients that can be excessive for children younger than 8, for pregnant women and for older adults, particularly post-menopausal women. Reviewing 1,025 snack and energy bars, EWG found 27 that contained 50 percent or more of the adult Daily Value of preformed vitamin A, zinc and/or niacin in a single serving (Table 6). Among the most-fortified snack bars are well-known brands such as Balance Bars, Kind bars and Marathon bars. (See Table A2 in Appendix A for a full listing.)

Table 6: Fortification levels in a sample of 1,025 energy and snack bars*

| Fortified nutrient | 25-45% of the adult Daily Value | 50% of the adult Daily Value | 100% of the adult Daily Value |
|---|---|---|---|
| Preformed vitamin A** | 71 products | 17 products | -- |
| Zinc | 71 products | 2 products | -- |
| Niacin | 73 products | 5 products | 8 products |

* The majority of snack and nutrition bars reviewed by EWG represent 2013 product formulations. EWG conducted a detailed review of package label information for 161 products identified in FoodEssentials’ database as having 25 percent or higher adult Daily Value for preformed vitamin A, zinc or niacin in a single serving. For these 161 products, the labels were gathered between Dec. 11, 2012 and March 6, 2014. Overall, 143 labels were from 2013; 17 labels were from 2014; and 5 labels were from 2012.

** Includes only products with preformed vitamin A as retinyl palmitate or retinyl acetate. Excludes products containing only vitamin A from natural sources or carotenoids such as beta-carotene.

Women who might be pregnant should not take high doses of vitamin A supplements and should be cautious with foods and personal care products that contain high amounts (BfR 2005; BfR 2014; Kloosterman 2007; Yourick 2008). Pregnant women need to be especially careful about their vitamin A intake, and snack bars that contain 50 percent of the adult Daily Value for preformed vitamin A can be an unsuspected source. The nutritional needs of pregnant and lactating women are unique. Optimal nutrition is essential for the growth and development of the fetus and the newborn, but ingesting preformed vitamin A in amounts above the Institute of Medicine’s Tolerable Upper Intake Level can cause serious birth defects such as malformations of the eye, skull, lungs and heart.

The Institute of Medicine set the Tolerable Upper Intake Level for pregnant women at 3,000 μg of preformed vitamin A per day. Some fortified foods, including snack bars and cereals, contain 50 percent of the adult Daily Value for vitamin A, added as preformed vitamin A such as retinyl palmitate or retinyl acetate. Because the adult Daily Value is 5,000 IU, or 1,500 μg, a single serving of such a product provides 750 μg of preformed vitamin A. Four servings, a realistic diet scenario, would reach the IOM limit. Other little-known sources of exposure during pregnancy are cosmetics, personal care products and sunscreens, some of which contain significant amounts of vitamin A (BfR 2014; Yourick 2008).
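The serving count follows from a unit conversion. This is a sketch, assuming all of the product's vitamin A is preformed (retinyl palmitate or acetate) and the label is accurate; it uses 1 IU of preformed vitamin A = 0.3 μg RAE, the factor implied by the 5,000 IU = 1,500 μg conversion used in this report. The function names are illustrative, not part of any official tool.

```python
import math

IU_PER_ADULT_DV = 5000  # current adult Daily Value for vitamin A, in IU


def iu_to_ug_retinol(iu: float) -> float:
    """Convert IU of preformed vitamin A (retinol) to μg RAE: 1 IU = 0.3 μg."""
    return iu * 3 / 10


def servings_to_reach(limit_ug: float, percent_dv: float) -> int:
    """Smallest whole number of servings whose preformed vitamin A meets or
    exceeds `limit_ug`, assuming each serving matches its label."""
    per_serving_ug = iu_to_ug_retinol(IU_PER_ADULT_DV * percent_dv / 100)
    return math.ceil(limit_ug / per_serving_ug)


if __name__ == "__main__":
    per_bar = iu_to_ug_retinol(IU_PER_ADULT_DV * 50 / 100)
    print(f"one 50% DV serving: {per_bar:g} μg preformed vitamin A")
    print("servings to reach the 3,000 μg pregnancy UL:", servings_to_reach(3000, 50))
```

The same helper works for any other limit; for example, `servings_to_reach(1500, 50)` returns 2.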

For women who take daily vitamin A-containing supplements and eat a diet that includes milk and meat products, consuming foods with large amounts of preformed vitamin A and using personal care products containing vitamin A can lead to routine intakes that might be risky. Women who take daily vitamin pills should monitor their consumption of foods fortified with vitamin A (Penniston 2003).

Harmful Effects of Excess Vitamins and Minerals

Adequate vitamin and mineral intake from a balanced diet is essential for maintaining health and preventing diseases caused by dietary deficiencies, such as pellagra (triggered by a shortage of niacin) or night blindness in children who lack vitamin A. But at too high a level some nutrients can be toxic. Ingesting too much vitamin A during pregnancy can cause severe developmental abnormalities in the fetus, for example. Excessive zinc can suppress rather than stimulate the immune system. Large doses of niacin can produce symptoms that range from nausea and blurred vision to liver toxicity.

A number of vitamins and minerals have been tested in clinical trials to investigate whether taking large amounts could prevent cancer and other diseases. Generally, excessive vitamin levels had no preventive effect and in some cases were associated with increased cancer deaths. The research highlights the importance of sufficient but not excessive intake of vitamins and minerals.

Vitamin A

People need adequate vitamin A to maintain normal immune function, eyesight, the reproductive system and many other aspects of health (Health Canada 2010). However, according to both the Office of Dietary Supplements of the National Institutes of Health and the FDA, vitamin A deficiency is uncommon in the United States today (ODS 2013a; FDA 2014b). As the FDA stated, “Vitamin A deficiency based on an assessment of vitamin A status is rare in the U.S. population” (FDA 2014b). In contrast, deficiencies remain a problem in some developing countries where diets do not provide sufficient amounts of vitamin A-rich animal foods. Insufficiency leads to vision problems, such as inability to see in low light or darkness.

A diet that includes five servings a day of carotenoid-rich fruits and vegetables as well as milk and meat products generally provides enough vitamin A without food fortification or supplementation (IOM 2001). Even in the U.S., however, some groups may not eat a sufficiently varied diet. American teenagers are a prime example; fewer than half get adequate vitamin A (Berner 2014).

With widespread vitamin A-fortified food and increasing use of dietary supplements, however, many Americans, especially younger children, have the opposite problem: consuming more vitamin A than the Institute of Medicine considers safe (IOM 2001; IOM 2003; IOM 2005; ODS 2013a).

Numerous case studies have shown the risks of excessive intake of vitamin A for infants, toddlers and children. Infants getting very high amounts can develop intracranial and skeletal abnormalities as well as increased cranial pressure. Among the more common signs of vitamin A toxicity are brittle nails, hair loss, fever, headaches and weight loss (IOM 2001). At high doses, vitamin A is also toxic to the liver, the body’s main storage site for vitamin A.

Due to the particular risks of vitamin A to young children, the German Federal Institute for Risk Assessment and the Dutch National Institute for Public Health and the Environment have recommended against vitamin A fortification of foods in general (BfR 2005; Kloosterman 2007).

High intakes of preformed vitamin A may also be a health risk for older adults, particularly post-menopausal women at risk of osteoporosis and hip fractures (Tanumihardjo 2013; UK EVM 2003). Preformed vitamin A compounds, whether naturally occurring in foods such as liver or taken as dietary supplements, have been shown to alter bone metabolism and lead to bone loss and osteoporosis in humans and laboratory animals (Penniston 2006; Walker 1982).

Five large-scale population studies in the United States and Sweden found that high dietary intake of vitamin A decreased bone density and increased the risk of hip fracture in both older women and men (Feskanich 2002; Lim 2004; Melhus 1998; Michaëlsson 2003; Promislow 2002). These studies prompted the United Kingdom Expert Group on Vitamins and Minerals, an independent committee that advises the British government’s Food Standards Agency, to set a Guidance Level for vitamin A in adults of 1,500 μg preformed vitamin A per day, half the Tolerable Upper Intake Level for adults set by the U.S. Institute of Medicine (UK EVM 2003). Eating just two snack bars with 50 percent of the adult Daily Value of preformed vitamin A would reach this level.

In the long-running, prospective Nurses’ Health Study of 72,337 postmenopausal women aged 34 to 77 years, those who ingested 2,000 micrograms or more of preformed vitamin A a day had nearly twice the rate of hip fractures of those who took less than 500 micrograms a day. Ingesting beta-carotene, a naturally occurring vitamin A precursor, did not contribute significantly to fracture risk (Feskanich 2002).

In animal studies, high dietary intake of vitamin A has been shown to decrease bone mass and lead to bone thinning and spontaneous fractures. Retinoic acid, the active form of vitamin A, inhibits bone formation (Lind 2013). Getting enough calcium and vitamin D is important for bone health, but vitamin A counteracts the positive effects of vitamin D (Johansson and Melhus 2001).

Zinc

Zinc is involved in many aspects of cellular metabolism and normal growth and development. It also plays a role in the immune system. Severe zinc deficiency in malnourished people can suppress immune function and wound healing. Overt zinc deficiency is uncommon in North America, according to the National Institutes of Health’s Office of Dietary Supplements, but low levels can occur in vulnerable populations, such as people with gastrointestinal or sickle cell disease (ODS 2013b).

Zinc taken within 24 hours of developing a common cold, as in zinc lozenges, can shorten the duration of cold symptoms, even though it may be associated with unpleasant symptoms such as nausea (Science 2012; Singh 2013). But in healthy adults, routinely adding supplemental zinc beyond the amounts in the food supply has few, if any, long-term benefits for immunity (Hodkinson 2007). One 2011 study of healthy 7-to-13-year-old children found that eating breakfast cereal fortified with 25 mg of zinc per 100 gram serving (four times the recommended dietary allowance for 9-to-13-year-olds) had no influence on immune function (Nieman 2011).

Although no adverse effects have been found from consuming naturally occurring zinc in food, excessive supplementation has been shown to suppress immune function. That’s because zinc interferes with copper absorption, leading to copper deficiency, anemia, changes in red and white blood cells and lowered immunity (IOM 2001). In clinical studies, high zinc intake has been associated with a significant increase in hospitalization for genitourinary causes (ODS 2013b).

Because of such concerns, the German Federal Institute for Risk Assessment has recommended against fortifying food with zinc (BfR 2006).

Meanwhile, the zinc intake of U.S. children has been increasing over the past two decades (Arsenault 2003; Butte 2010). In 2003, that led researchers at the University of California, Davis to warn that, “if zinc intake continues to increase because of the greater availability of fortified foods in the US food supply, the amount of zinc consumed by children may become excessive” (Arsenault 2003). Two years later, the Institute of Medicine said high intake of fortified zinc was a growing concern for young children (IOM 2005).

Today, 72 percent of 1-to-3-year-old children get too much zinc from diet and supplements (Butte 2010). The problem is particularly acute in families participating in the federal Women, Infants and Children (WIC) nutrition program and those in the lowest income category, because these groups often eat diets with limited fresh food and more processed, fortified foods (IOM 2005).

Niacin

Niacin plays a role in a variety of metabolic reactions and is necessary for the activity of many enzymes. Niacin deficiency results in a disease called pellagra that affects skin, the digestive tract and the nervous system. The disease was common in the United States and parts of Europe in the early 20th century in areas where corn, a cereal low in both niacin and the amino acid tryptophan, was a dietary staple (IOM 1998b), but today it has virtually disappeared in the developed world. Alcoholism is currently the main cause of niacin deficiency in the United States, according to the University of Maryland Medical Center (UMM 2013).

Excess niacin can lead to flushing reactions, tingling, itching and reddening of the skin, rashes and nausea. High doses can cause liver toxicity, with symptoms such as jaundice, glucose intolerance and blurred vision (IOM 1998).

In February of this year (2014), a case of niacin toxicity was reported after a shipment of enriched rice was apparently accidentally over-fortified by the manufacturer, Mars Foodservices. The company recalled its Uncle Ben’s Infused Rice products after students and teachers in some Texas public schools experienced burning, itching rashes, headaches and nausea 30-to-90 minutes after eating the rice. Similar cases have occurred in Illinois and North Dakota (FDA 2014d). Mars Foodservices acknowledged in a statement that the illnesses might have been related to high levels of niacin in its enriched rice (Sun 2014).

Vitamin supplementation trials underscore potential risks

Recent experiments with vitamin supplementation have also illustrated the dangers of excessive vitamin intake and shown that supplements are generally ineffective for preventing deaths or disease due to major chronic diseases (Fortmann 2013; Guallar 2013; U.S. Preventive Services Task Force 2014).

In several clinical trials, participants who took elevated amounts of β-carotene, vitamin E and vitamin A actually had a higher risk of cancer and higher mortality than a control group (Omenn 2007). One study, the beta-Carotene and Retinol Efficacy Trial, tested the effect of daily doses of β-carotene (30 mg) and retinyl palmitate (25,000 IU) on cancer incidence in 18,314 participants at high risk of lung cancer because of a history of smoking or asbestos exposure. This multi-center trial, sponsored by the Fred Hutchinson Cancer Research Center and the National Cancer Institute, began in 1985 and was halted in January 1996, 21 months ahead of schedule. It was stopped because of a clear link between vitamin intake and cancer incidence and mortality (ClinicalTrials.gov 2012). Participants who took the vitamins had a 28 percent higher lung cancer incidence, 17 percent more deaths overall and a higher rate of heart disease deaths than those who took a placebo (Goodman 2004).

Similarly, the Selenium and Vitamin E Cancer Prevention Trial, conducted by the National Cancer Institute at 427 participating sites across the United States, Canada, and Puerto Rico, found no beneficial effect of selenium supplementation on prostate cancer risk, and instead found a 17 percent increased risk from taking vitamin E (Kristal 2014). In this study of 1,739 men with diagnosed prostate cancer, participants took 200 micrograms of selenium and 400 IU of vitamin E daily, either separately or in combination. This trial was also stopped once the data revealed there was no protective benefit for selenium and an increased risk of prostate cancer among those taking vitamin E. The researchers concluded that men should avoid selenium or vitamin E supplementation at doses above the recommended dietary intakes.

Even high exposure to folic acid, which is legally required to be added to flour and flour products in the United States to prevent fetal developmental defects, has been linked to unanticipated risks such as childhood allergy and asthma and elevated cancer risk (Brown 2014; Castillo 2012). In a study in Norway, where folic acid is not added to food, treatment with folic acid plus vitamin B12 was associated with higher cancer rates and all-cause mortality in patients with ischemic heart disease (Ebbing 2009).

In Australia, a study found that consuming synthetic folic acid was statistically associated with colon polyps in older men (Lucock 2011). A March 2014 study by the Roswell Park Cancer Institute in Buffalo, N.Y., found an increased risk of breast cancer among European-American women who ingested the most synthetic folate from fortified foods. In contrast, eating foods naturally high in folate was associated with lower breast cancer risk (Gong 2014). In animal studies, folic acid has accelerated progression of mammary tumors (Deghan Manshadi 2014).

In summary, a variety of studies designed to investigate whether high vitamin doses could prevent disease have demonstrated that excessive intake can instead be harmful. These findings lend urgency to the need to protect young children from excessive vitamin and mineral supplementation.

How Much Is Too Much?

For 1-to-3-year-olds, a single serving of food containing 20 percent of the adult Daily Value of vitamin A, zinc and niacin provides the full recommended dietary allowances of vitamin A and zinc and two-thirds of the recommended niacin intake. Avoiding excessive intakes is particularly important for children younger than 4 because their tolerable intake limits are low, reflecting their lower body weight.

For 4-to-8-year-old children, a single serving of cereal containing 20 percent of the adult Daily Value of vitamin A, zinc or niacin provides 50-to-75 percent of the recommended daily dietary allowance (Table 7). The Institute of Medicine defines Recommended Dietary Allowance as the amount that provides the nutrient needs of 97-to-98 percent of healthy individuals in a particular age group.

Table 7: One serving with 20 percent of the adult Daily Value (DV) of certain nutrients supplies half or more of the Recommended Dietary Allowance (RDA) for 4-to-8-year-old children and the complete or nearly complete RDA for 1-to-3-year-olds

| Fortified nutrient | Amount in one serving containing 20% of the adult DV | RDA for 1-to-3-year-olds | Percentage of RDA for a 1-to-3-year-old from a single serving with 20% of the adult DV | RDA for 4-to-8-year-olds | Percentage of RDA for a 4-to-8-year-old from a single serving with 20% of the adult DV |
|---|---|---|---|---|---|
| Vitamin A* | 1,000 IU (300 μg RAE) | 300 μg RAE/day | 100% | 400 μg RAE/day | 75% |
| Zinc | 3 mg | 3 mg/day | 100% | 5 mg/day | 60% |
| Niacin | 4 mg | 6 mg/day | 67% | 8 mg/day | 50% |

* The current FDA Daily Value for vitamin A is expressed in the outdated form of international units (IU). 1,000 IU corresponds to 300 μg (micrograms) of retinol activity equivalents (RAE), a measure for expressing vitamin A activity.
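Table 7 can be reproduced from the current adult Daily Values (5,000 IU, or 1,500 μg RAE, of vitamin A; 15 mg of zinc; 20 mg of niacin) and the IOM RDAs. The sketch assumes each serving contains exactly the labeled amount; the dictionaries simply restate the values above, and the function name is hypothetical.

```python
# Current adult Daily Values (vitamin A in μg RAE; zinc and niacin in mg).
ADULT_DV = {"vitamin A": 1500.0, "zinc": 15.0, "niacin": 20.0}
# IOM Recommended Dietary Allowances by age group, in the same units.
RDA = {
    "1-3": {"vitamin A": 300.0, "zinc": 3.0, "niacin": 6.0},
    "4-8": {"vitamin A": 400.0, "zinc": 5.0, "niacin": 8.0},
}


def percent_of_rda(percent_dv: float, nutrient: str, age_group: str) -> float:
    """Percent of a child's RDA supplied by one serving labeled `percent_dv`
    percent of the adult DV (assumes the label is accurate)."""
    amount = ADULT_DV[nutrient] * percent_dv / 100.0
    return 100.0 * amount / RDA[age_group][nutrient]


if __name__ == "__main__":
    for nutrient in ADULT_DV:
        row = [round(percent_of_rda(20, nutrient, age)) for age in ("1-3", "4-8")]
        print(nutrient, row)
```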

So long as outdated adult Daily Values are used for nutrition labeling, foods fortified with 50-to-100 percent of the adult Daily Value of vitamin A, zinc or niacin in a single serving pose the highest risk of overexposure for children 8 and younger. For pregnant women, foods with 50 percent or more of the adult Daily Value for vitamin A are also a risk, because ingesting excessive vitamin A during pregnancy can cause developmental defects in the fetus.

An additional complicating factor is that some cereals may contain more added vitamins and minerals than the label reflects, a situation that the 2003 Institute of Medicine report highlighted as a potential source of unsafe exposures (IOM 2003; UK EVM 2003). These “overages” occur when manufacturers deliberately add more of a nutrient than the label states, either to ensure that it will be at the advertised level throughout the product’s shelf life or to compensate for expected breakdown of unstable vitamins (Ottaway 2008).

Overages vary depending on the stability of the added ingredient. Unstable nutrients, such as vitamin A, generally require high overages (Wirakartakusumah 1998). Larger amounts are also sometimes added accidentally, which can cause illness (FDA 2014d). In 2001 Metabolife International recalled 1.5 million Diet & Energy Bars because they contained excessive amounts of vitamin A (Associated Press 2001), and in 2014 Mars Foodservices recalled the Uncle Ben’s Infused Rice products that were accidentally fortified with too much niacin (Sun 2014).

Studies in the U.S., the European Union and New Zealand have found that the actual level of fortified nutrients can be as much as double the amount on the label (Samaniego-Vaesken 2010; Thomson 2005; Thomson 2006; Thomson 2007; Whittaker 2001). A Dutch study published this year (2014) identified one fortified food that contained four times the amount of vitamin A listed on the label (Brandon 2014). In cereals that claim to provide 50-to-100 percent of the adult Daily Value, these “overages” can pose a significant health concern for young children, pregnant women and older adults.
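To see why overages matter most for products already fortified at high levels, the sketch below takes a serving labeled at 50 percent of the adult Daily Value for preformed vitamin A (750 μg) and scales it by overage factors of 1 (as labeled), 2 (the doubling reported in several studies) and 4 (the Dutch finding). It compares one serving with the IOM upper limits of 600 μg (ages 1 to 3), 900 μg (ages 4 to 8) and 3,000 μg (pregnancy). The helper name is hypothetical, and it assumes the overage applies uniformly to the whole serving.

```python
# Preformed vitamin A in one serving labeled 50% of the adult DV (μg RAE).
LABEL_UG_PER_SERVING = 750.0

# IOM Tolerable Upper Intake Levels for preformed vitamin A (μg/day).
UL = {"1-to-3-year-old": 600.0, "4-to-8-year-old": 900.0, "pregnant woman": 3000.0}


def share_of_ul(overage_factor: float, servings: float = 1.0) -> dict[str, float]:
    """Fraction of each UL reached when the true content is `overage_factor`
    times the labeled amount (hypothetical helper; uniform overage assumed)."""
    actual = LABEL_UG_PER_SERVING * overage_factor * servings
    return {group: actual / limit for group, limit in UL.items()}


if __name__ == "__main__":
    for factor in (1, 2, 4):  # as labeled, doubled, quadrupled
        shares = share_of_ul(factor)
        print(factor, {group: f"{s:.0%}" for group, s in shares.items()})
```

At double the labeled amount, a single serving is 2.5 times the limit for a 1-to-3-year-old; at four times, one serving equals the pregnancy limit.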

Flawed and Outdated Daily Values and Industry Marketing Put Children at Risk

FDA’s Daily Values are outdated

Most consumers are familiar with the Nutrition Facts panel detailing the nutritional content of packaged foods. Most consumers are also familiar with the percent Daily Value numbers the panels list for many nutrients. What most consumers don’t realize, however, is that these Daily Values were calculated only for adults, or that they were set in the 1960s and have never been updated.

The FDA’s goal at that time was to help consumers avoid nutritional deficiencies. Over the last 50 years, however, such deficiencies have become uncommon, and the opposite problem has emerged. Today, the FDA’s Daily Values exceed the Tolerable Upper Intake Levels for children 8 and younger calculated by the Institute of Medicine (Table 8). (For a complete analysis, see Appendix C.)

Table 8: FDA’s current adult Daily Values for vitamin A, zinc and niacin exceed IOM’s Tolerable Upper Intake Levels for children 8 and younger

| Fortified nutrient | FDA Daily Value for adults and children 4 or older | IOM Tolerable Upper Intake Level for 4-to-8-year-olds | FDA Daily Value for children younger than 4 | IOM Tolerable Upper Intake Level for 1-to-3-year-olds |
|---|---|---|---|---|
| Vitamin A* | 5,000 IU (1,500 μg RAE) | 900 μg RAE/day | 2,500 IU (750 μg RAE) | 600 μg RAE/day |
| Zinc | 15 mg/day | 12 mg/day | 8 mg/day | 7 mg/day |
| Niacin | 20 mg/day | 15 mg/day | 9 mg/day | 10 mg/day |

* The current FDA Daily Value for vitamin A is expressed in the outdated form of international units (IU). 5,000 IU corresponds to 1,500 μg (micrograms) of retinol activity equivalents (RAE).
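The gap shown in Table 8 can be expressed as a percentage. This sketch restates the table's values (vitamin A in μg RAE) and computes how far 100 percent of the current adult Daily Value sits above each age group's Tolerable Upper Intake Level; the function name is illustrative.

```python
# Current FDA adult Daily Values and IOM Tolerable Upper Intake Levels (Table 8).
# Vitamin A in μg RAE/day; zinc and niacin in mg/day.
ADULT_DV = {"vitamin A": 1500.0, "zinc": 15.0, "niacin": 20.0}
UL = {
    "1-3": {"vitamin A": 600.0, "zinc": 7.0, "niacin": 10.0},
    "4-8": {"vitamin A": 900.0, "zinc": 12.0, "niacin": 15.0},
}


def excess_over_ul(age_group: str) -> dict[str, float]:
    """Percent by which 100% of the adult DV exceeds the age group's UL
    (a negative value would mean the DV is below the limit)."""
    return {n: 100.0 * (dv / UL[age_group][n] - 1) for n, dv in ADULT_DV.items()}


if __name__ == "__main__":
    for age in UL:
        print(age, {n: f"{x:+.0f}%" for n, x in excess_over_ul(age).items()})
```

For 4-to-8-year-olds the adult Daily Values exceed the limits by roughly 25 to 67 percent; for 1-to-3-year-olds, by 100 to 150 percent.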

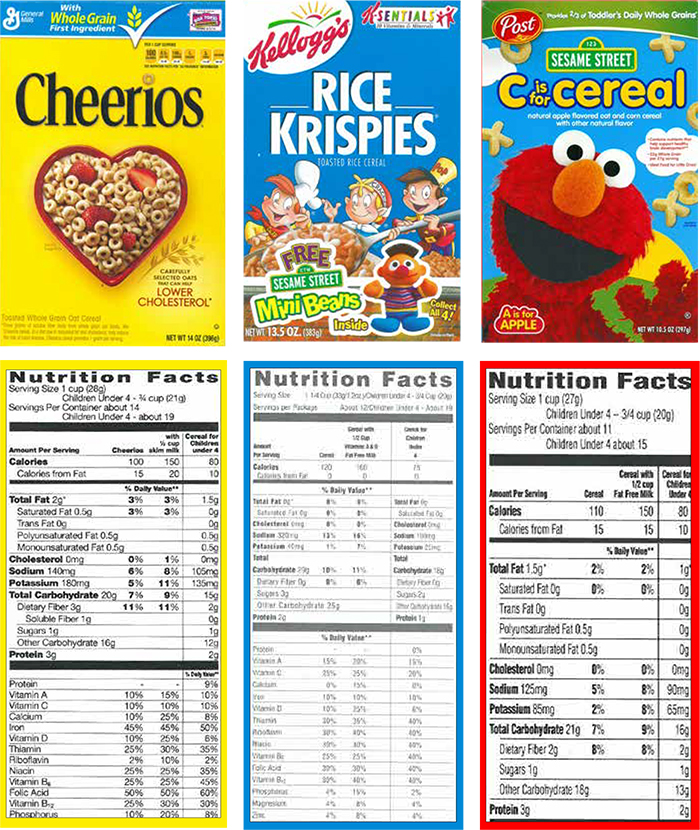

FIGURE 1: Sample Nutrition Facts panel displaying Daily Values for adults and for children younger than 4

Source: This sample Nutrition Facts panel, rendered by EWG, is based on the Nutrition Facts from Post C is for Cereal.

There are two major reasons that children are consuming excessive fortified nutrients in food. The first is that food manufacturers discovered that adding nutrients and putting health claims on packaging sells products, which has made voluntary fortification of certain types of foods ubiquitous. The second reason is simply that the FDA’s outdated dietary Daily Values on Nutrition Facts labels are based on adult dietary needs. They were set in 1968 – more than 40 years ago – when the primary concerns were still nutritional deficiencies (NRC 1968). The disconnect between the current Daily Values for nutrition labeling and children’s actual nutritional needs puts millions of American children at risk of excessive exposure to vitamin A, zinc and niacin.

The Daily Values for nutrition labeling, used on a vast majority of products, are defined by the FDA as “reference values, based on a 2,000 calorie intake, for adults and children 4 and more years of age.” The FDA also publishes a table with Daily Values for infants, children younger than four and pregnant and lactating women, but these are almost never used on product labels (FDA 2007).

The current FDA Daily Value for adults and children 4 and older is 2.5-to-3.75 times the recommended dietary allowance for the 4-to-8-year-old group for vitamin A, zinc, and niacin. The FDA Daily Values for children less than 4 years are 1.5-to-2.7 times the Institute of Medicine’s recommended dietary allowances.
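The multiples quoted above can be checked with a short calculation. This sketch assumes the Daily Values for children younger than 4 are compared with the RDAs for 1-to-3-year-olds; the values are taken from Tables 7 and 8, and the helper name is hypothetical.

```python
# Vitamin A in μg RAE/day; zinc and niacin in mg/day.
DV = {
    "adults and children 4+": {"vitamin A": 1500.0, "zinc": 15.0, "niacin": 20.0},
    "children under 4": {"vitamin A": 750.0, "zinc": 8.0, "niacin": 9.0},
}
RDA = {
    "4-8": {"vitamin A": 400.0, "zinc": 5.0, "niacin": 8.0},
    "1-3": {"vitamin A": 300.0, "zinc": 3.0, "niacin": 6.0},
}


def ratio_range(dv_set: str, age_group: str) -> tuple[float, float]:
    """(min, max) of Daily Value / RDA across vitamin A, zinc and niacin."""
    ratios = [DV[dv_set][n] / RDA[age_group][n] for n in DV[dv_set]]
    return min(ratios), max(ratios)


if __name__ == "__main__":
    low, high = ratio_range("adults and children 4+", "4-8")
    print(f"4+ DV vs 4-to-8 RDA: {low:.2f}x to {high:.2f}x")
    low, high = ratio_range("children under 4", "1-3")
    print(f"under-4 DV vs 1-to-3 RDA: {low:.2f}x to {high:.2f}x")
```

It returns 2.5 to 3.75 for the adult Daily Values against the 4-to-8-year-old RDAs, and 1.5 to about 2.7 for the under-4 Daily Values against the 1-to-3-year-old RDAs.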

The FDA tried to update its Daily Values in 1991-1992 in the process of implementing the Nutrition Labeling and Education Act of 1990 (IOM 2010). At the time, the FDA proposed to reset the Daily Values to the IOM’s Recommended Dietary Allowances, which would have brought the Daily Values on nutrition labels in line with then-current science. However, on Oct. 6, 1992, under heavy lobbying by the vitamin and supplement manufacturers, Congress passed the Dietary Supplement Act of 1992 that instructed the FDA not to promulgate for at least one year any regulations based upon updated Recommended Dietary Allowances (IOM 2010).

As a result, the window of opportunity provided by the law’s implementation was lost and the 1968 Daily Values remained the basis of the Nutrition Label. In the absence of political will, FDA has never implemented any of the Institute of Medicine recommendations, particularly recommendations on protecting children from excessive exposure to fortified vitamins and minerals (IOM 2003; IOM 2005). The FDA’s fortification policy dates back to 1980 and has not been revised based on new science, even as fortification of food products has expanded significantly (Dwyer 2014; FDA 1980; Yamini 2012).

Despite the evidence of potential risks, manufacturers of vitamins and fortified food continue to advocate for fortification. Publications by manufacturers and industry-supported scientists overemphasize the nutritional deficiencies of some groups and downplay the reality that young children today are at greater risk from excessive intake from fortified foods and dietary supplements (CRN 2014; McBurney 2013; Murphy 2013; Yates 2006).

FDA’s proposed changes to nutrition labels must go further

In order to protect children from the risks of excessive intake of fortified nutrients, it is urgent to update the dietary values used for nutrition labeling to reflect the latest science. It is also important for Nutrition Facts labels to list the actual amounts of micronutrients added, rather than only the percent Daily Values, since dietary needs vary significantly by age and gender. A single set of dietary values cannot address this diversity. Products specifically developed for children should be required to list age-specific Daily Values.

In March 2014, the FDA proposed revisions to the Nutrition Facts label (FDA 2014b). These revisions are a good start but are insufficient to protect children’s health from exposure to excessive fortified nutrients. Under the proposed rules, the Daily Value for nutrition labeling for 1-to-3-year-old children would be set at the Institute of Medicine’s Recommended Dietary Allowance for this age. This is an important step forward, and EWG strongly supports FDA’s decision to update the Daily Values for 1-to-3-year-olds based on the Institute’s recommendations.

In contrast, the FDA’s proposed Daily Values are inappropriate for 4-to-8-year-old children. The proposed vitamin A Daily Value for adults and children 4 or older is the same as the Tolerable Upper Intake Level for 4-to-8-year-old children. The proposed Daily Value for niacin is higher than the Tolerable Upper Intake Level for this age group (Table 9).

Table 9: Proposed FDA Daily Values do not protect 4-to-8-year-olds

| Fortified nutrient | Tolerable Upper Intake Level for 4-to-8-year-olds | Current FDA Daily Value for adults and children 4 or older | Proposed FDA Daily Value for adults and children 4 or older |
|---|---|---|---|
| Vitamin A | 900 μg RAE/day | 1,500 μg RAE/day | 900 μg RAE/day |
| Zinc | 12 mg/day | 15 mg/day | 11 mg/day |
| Niacin | 15 mg/day | 20 mg/day | 16 mg/day |

Source: FDA. Food Labeling: Revision of the Nutrition and Supplement Facts Labels. Fed. Reg. Vol. 79, No. 41, 11879-11987, March 3, 2014.
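A direct comparison makes the problem with the proposal explicit. The sketch below restates Table 9 and labels each proposed Daily Value relative to the Tolerable Upper Intake Level for 4-to-8-year-olds; the function name is illustrative.

```python
# Values from Table 9 (vitamin A in μg RAE/day; zinc and niacin in mg/day).
UL_4_TO_8 = {"vitamin A": 900.0, "zinc": 12.0, "niacin": 15.0}
PROPOSED_DV = {"vitamin A": 900.0, "zinc": 11.0, "niacin": 16.0}


def compare_to_ul(dv: dict[str, float], ul: dict[str, float]) -> dict[str, str]:
    """Label each Daily Value as above, equal to, or below the matching UL."""
    out = {}
    for nutrient, value in dv.items():
        limit = ul[nutrient]
        if value > limit:
            out[nutrient] = "above UL"
        elif value == limit:
            out[nutrient] = "equal to UL"
        else:
            out[nutrient] = "below UL"
    return out


if __name__ == "__main__":
    print(compare_to_ul(PROPOSED_DV, UL_4_TO_8))
```

It reports vitamin A as equal to the limit, zinc as just below it and niacin as above it, so a 4-to-8-year-old eating foods that together supply 100 percent of the proposed Daily Values would be at or over the limit for two of the three nutrients.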

The Daily Values used for nutrition labeling must be age-specific for 1-to-3-year-olds and 4-to-8-year-olds. Children 4 to 8 years old cannot be grouped with adults, as is currently the case. They eat a different diet than adults do; their bodies are smaller; their vitamin and mineral needs are different; and their tolerance for excessive intake of vitamins and minerals is much lower. Combining 4-to-8-year-olds with adults for the purpose of nutrition labeling makes no scientific sense and leads to potentially harmful over-exposures to fortified vitamins and minerals.

For 17 vitamins and minerals, the FDA’s recently proposed Daily Values are at least twice the Recommended Dietary Allowances (RDA) for 4-to-8-year-old children. This is clearly problematic. (See Appendix C for a detailed analysis.)

EWG’s review of 1,556 cereals found that the vast majority of Nutrition Facts labels reflect only the adult Daily Values, including on products clearly marketed to children. A few products do list Daily Values for children younger than 4. For example, Post Foods Sesame Street C is for Cereal and some General Mills’ Cheerios products (original formulation) list both adult and children’s Daily Values on the label, displaying Nutrition Facts based on FDA’s Daily Values for children under 4 (General Mills 2014; Post Foods 2013). EWG also found a Kellogg’s Sesame Street Rice Krispies cereal from the late 1990s that listed Daily Values for children under 4. Such practices are entirely voluntary, however, and are rare.

FIGURE 2: Examples of 3 cereals that have displayed Daily Values for adults and children

Source: Products purchased by EWG. General Mills Cheerios cereal was bought in Washington, DC in April 2014. Post C is for Cereal and Kellogg’s Rice Krispies were bought online as cereal boxes. While the original purchase date for these two products is not available, the dates stamped on the boxes indicate that Post C is for Cereal is a current formulation (expiration date Jan. 22, 2014) and Kellogg’s Rice Krispies is a formulation from the 1990s (expiration date Feb. 5, 2001).

The FDA has also proposed to change the portion sizes listed on nutrition labels for some foods and beverages to more accurately reflect the amounts that Americans, including children, actually eat (FDA 2014c). For cereals, however, the agency did not propose any changes to serving sizes. The FDA’s own data show that the average American eats 30 percent more than the labeled serving size for the most popular category of cold cereals, medium-density cereals weighing between 20 and 43 grams per cup. The FDA’s reference serving size for these cereals is 30 grams, but its analysis of food consumption data from the 2003-2008 National Health and Nutrition Examination Survey (NHANES) showed that the median amount eaten is actually 39 grams. The 30 percent difference between the amount actually eaten and the reference serving size exceeds the FDA’s own 25 percent threshold for updating serving sizes (FDA 2014c).
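To make the serving-size arithmetic concrete, here is a minimal Python sketch using the FDA figures cited above (a 30-gram reference serving, 39 grams actually eaten, and a 25 percent update threshold). The function names are illustrative, not an FDA method. The scaling step assumes, as a simplification, that nutrient content is proportional to the weight of cereal eaten, and the 25 percent vitamin A label value is hypothetical rather than taken from a specific product.

```python
# Figures cited above from the FDA's 2014 serving-size analysis (FDA 2014c).
REFERENCE_SERVING_G = 30.0   # FDA reference amount for medium-density cold cereals
AMOUNT_EATEN_G = 39.0        # amount actually eaten, NHANES 2003-2008
UPDATE_THRESHOLD = 0.25      # FDA's 25 percent bar for updating serving sizes


def percent_difference(eaten_g: float, reference_g: float) -> float:
    """Fractional difference between the amount eaten and the labeled serving."""
    return (eaten_g - reference_g) / reference_g


def scaled_percent_dv(label_percent_dv: float, eaten_g: float, reference_g: float) -> float:
    """Estimate the %DV actually consumed when the portion differs from the label.

    Assumption: nutrients scale linearly with the weight of cereal eaten.
    """
    return label_percent_dv * eaten_g / reference_g


diff = percent_difference(AMOUNT_EATEN_G, REFERENCE_SERVING_G)
print(f"Difference: {diff:.0%}")                      # Difference: 30%
print("Exceeds update bar:", diff > UPDATE_THRESHOLD)  # Exceeds update bar: True

# Hypothetical cereal labeled at 25 percent of the adult DV for vitamin A per 30 g.
print(f"{scaled_percent_dv(25, AMOUNT_EATEN_G, REFERENCE_SERVING_G):.1f}% DV")  # 32.5% DV
```

Under that linear assumption, a child eating the typical 39-gram portion of a cereal labeled at 25 percent of the adult Daily Value would actually get about 32.5 percent.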

Because many American children eat more cereal at a sitting than the unrealistically small serving sizes listed on labels, they are getting even more nutrients than the labels suggest. EWG calls on the FDA to update the cereal serving sizes cited on Nutrition Facts labels to accurately reflect the larger amounts that Americans actually eat.

FDA should curb the use of excessive fortification as a marketing tool

The FDA’s non-enforceable food fortification policy was published in 1980 and has not been updated since, despite the growth of the fortified food market (Dwyer 2014; FDA 1980; Yamini 2012). There is no official guidance in the United States today on how much fortification is safe in foods eaten by various age groups.

With no legal limits on fortification of most products, manufacturers are free to add virtually any amount of vitamin A and zinc to breakfast cereals or snacks, and many add much more niacin than the FDA requires in enriched grain-based products. This lack of food fortification regulation in the United States contrasts with official policy in other developed countries. For example, Germany’s Federal Institute for Risk Assessment recommends against vitamin A fortification of foods other than margarine and butter spreads and against any food fortification with zinc (BfR 2005; BfR 2006).

In August 2013, the FDA announced that it would study how nutrient content claims affect consumers’ attitudes about food products, noting that the agency “does not encourage the addition of nutrients to certain food products (including sugars or snack foods such as [cookies] candies, and carbonated beverages).” The agency said it wants to examine whether “fortification of these foods could cause consumers to believe that substituting fortified snack foods for more nutritious foods would ensure a nutritionally sound diet” (FDA 2013). The newfound concern over this now common practice is welcome, but it is also “too little, too late.”

As a result of unregulated food fortification, heavy industry marketing using added nutrients and associated health claims, and frequent use of dietary supplements, American children 8 and younger are getting too much vitamin A, zinc and niacin, which could lead to negative health effects (Bailey 2012b; Butte 2010; Fulgoni 2011; IOM 2003; IOM 2005). Consuming too much vitamin A from fortified food and supplements could also pose health risks to pregnant women and to older adults.

Some important dietary inadequacies still exist, such as insufficient intake of vitamins A, C, D and E, calcium and magnesium among adults and teenagers (see Appendix B). But with the exception of vitamins D and E and calcium, inadequate intakes are rare among children 8 and younger.

EWG compared the prevalence of vitamin and mineral insufficiency among Americans with fortification levels in two of the most commonly fortified foods, ready-to-eat breakfast cereals and snack bars. The comparison reveals a clear disconnect between what Americans actually need and the amounts found in the most heavily fortified foods. (See Appendix B.)

EWG also identified 20 cereals that contain multiple vitamins and minerals added at 100 percent of the current adult Daily Value in a single serving. (See Table A3 in Appendix A for the full list.) Nineteen feature a claim advertising their vitamin and mineral content. Two cereals use the number “100” in the product name itself (Food Lion Whole Grain 100 and Stop & Shop Source 100). Four products highlight the 100 percent Daily Value per serving for specific nutrients such as vitamin C, vitamin E or iron. Fourteen highlight the presence of multiple fortified vitamins with terms such as “100 percent Daily Value,” “Excellent Source,” “Good Source,” “With/Provides Essential Vitamins and Minerals” or “Antioxidants.”

Such marketing strategies, fully legal under the FDA’s outdated policy, drive excessive fortification and pose a risk of over-exposure for some age groups, particularly children 8 and younger. In its 2003 report on the Guiding Principles for Nutrition Labeling and Fortification, the prestigious Institute of Medicine wrote, “Manufacturers often adjust the quantities of particular ingredients or discretionary fortificants so that their products can be shown in the Nutrition Facts box to have a higher percent DV for some nutrients and a lower percent DV for others.” The report highlighted that some manufacturing practices may result in unnecessary, excessive intake of fortified nutrients. The potential harmful effects are of greatest concern for nutrients for which the Daily Value used on nutrition labels is close to or exceeds the Tolerable Upper Intake Level for young children (IOM 2003).

The concern over excessive fortification of food as a marketing tool is not new. As far back as 1990, the Institute of Medicine’s Committee on the Nutrition Components of Food Labeling wrote that “the use of [Daily Value] percentages creates undesirable incentives for manufacturers to over-fortify foods in order to achieve ‘100 percent of your [or the government’s] requirements’” (IOM 1990).

EWG believes the FDA should update its food fortification policy to bring it in line with current science and with the Institute of Medicine’s recommendations on the necessary and safe intakes of added vitamins and minerals. The agency should also rein in the use of excessive fortification as a marketing tool. As long as food fortification remains at the discretion of manufacturers, EWG recommends that parents give their children 8 and younger foods with no more than 20-to-25 percent of the adult Daily Value for vitamin A, zinc and niacin.

EWG’s Recommendations

For parents

- Parents should be cautious about products containing more than 20-25 percent of the adult Daily Value for vitamin A, zinc or niacin, and should monitor their children’s intake of these and other foods to make sure kids do not get too much of these nutrients. As long as the outdated adult Daily Values continue to be used for nutrition labeling, parents must pay close attention to the ingredients in the foods their children eat, particularly fortified vitamins and minerals, sugar and trans fats. Under realistic diet scenarios, products with 20-25 percent or less of the adult Daily Value for vitamin A, zinc or niacin are unlikely to pose a risk of excessive intake for children. Products that contain more than 20-25 percent of the adult Daily Value may pose a risk, especially if a child also takes a multivitamin.

- Educate yourself about the different forms of vitamin A. The danger of overexposure applies to preformed vitamin A, not to products with naturally occurring high levels of carotenoids, which are vitamin A precursors. For example, products that contain carrots or pumpkin, which are naturally high in carotenoids, may show a very high percentage of the vitamin A Daily Value on the nutrition label but are considered safe. Preformed vitamin A can appear on the label as retinyl palmitate, retinyl acetate, vitamin A palmitate, vitamin A acetate or retinol.

- Read labels carefully to identify what form of vitamin A the product contains and the overall amount added. If the label lists preformed vitamin A at more than 20-25 percent of the adult Daily Value, it could give a child too much vitamin A.
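The label-reading advice above can be expressed as a simple screening rule. The Python sketch below is hypothetical: the function names, the dictionary label format and the choice of 20 percent (the lower end of EWG’s 20-25 percent range) are illustrative assumptions, not an EWG or FDA tool. It also assumes, as a simplification, that a product’s vitamin A %DV comes from preformed vitamin A whenever a preformed form appears in the ingredients.

```python
# Hypothetical screening helper; names and structure are illustrative only.

# Names under which preformed vitamin A can appear on a label (from the text above).
PREFORMED_VITAMIN_A_NAMES = (
    "retinyl palmitate",
    "retinyl acetate",
    "vitamin a palmitate",
    "vitamin a acetate",
    "retinol",
)

# Lower end of EWG's suggested 20-25 percent range, used as a conservative default.
CAUTION_THRESHOLD_PERCENT_DV = 20.0


def contains_preformed_vitamin_a(ingredients: list[str]) -> bool:
    """True if any ingredient mentions a known preformed vitamin A name."""
    lowered = [item.lower() for item in ingredients]
    return any(name in item for item in lowered for name in PREFORMED_VITAMIN_A_NAMES)


def flags_for_child(label: dict[str, float], ingredients: list[str],
                    threshold: float = CAUTION_THRESHOLD_PERCENT_DV) -> list[str]:
    """Return nutrients whose adult %DV per serving exceeds the threshold.

    Vitamin A is flagged only when a preformed form is listed, because
    carotenoid sources such as carrots or pumpkin are not the concern here.
    """
    flags = [n for n in ("zinc", "niacin") if label.get(n, 0) > threshold]
    if label.get("vitamin a", 0) > threshold and contains_preformed_vitamin_a(ingredients):
        flags.append("vitamin a")
    return flags


# Hypothetical fortified cereal: all three nutrients exceed 20 percent of the adult DV.
print(flags_for_child({"zinc": 25, "niacin": 35, "vitamin a": 30},
                      ["whole grain corn", "sugar", "zinc oxide", "vitamin A palmitate"]))
# ['zinc', 'niacin', 'vitamin a']

# Hypothetical carrot-and-pumpkin snack: high vitamin A %DV, but no preformed form listed.
print(flags_for_child({"vitamin a": 200}, ["carrots", "pumpkin"]))  # []
```

A real label check would also need to account for portion size and for any multivitamin the child takes, which this sketch ignores.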

For policymakers

- The FDA should finalize its new nutrition label and adjust the adult Daily Values and children’s Daily Values to be in line with current science and the recommendations of the Institute of Medicine.

- The FDA should require the nutrition labels on products marketed for children to display age-specific percent Daily Values, such as for 1-to-3-year-olds and 4-to-8-year-olds.

- The FDA should update the cereal serving sizes cited on Nutrition Facts labels to accurately reflect the larger amounts that Americans actually eat.

- The FDA should modernize its 1980 food fortification policy, particularly for products that children 8 and younger may eat, in order to avoid excessive nutrient exposure from fortified foods.

- The FDA should limit the use of fortification and nutrient claims as marketing tools.

For food manufacturers

- Responsible food manufacturers should avoid over-fortifying products marketed to children.

- Products children eat should contain no more than the Recommended Dietary Allowance for each age group and should never exceed the children’s Tolerable Upper Intake Levels set by the Institute of Medicine.

- For products eaten by both children and adults, food manufacturers should list age-specific Daily Values on the nutrition label for 1-to-3-year-olds, 4-to-8-year-olds and adults.

Appendix A: Fortification of Cereals and Snack Bars

Table A1: 114 cereals contain 30 percent or more of the adult Daily Value for zinc, niacin and/or vitamin A per serving

| Breakfast cereals, in alphabetical order within each cereal category | Zinc % DV | Niacin % DV | Vitamin A % DV¹ |
|---|---|---|---|
| Cold cereal | | | |
| America's Choice Crispy Corn & Rice Hexagons Cereal | 10 | 35 | 25 |
| America's Choice Crispy Rice Sweetened Rice Cereal | 25 | 30 | 15 |
| America's Choice Essentially You Crispy Rice & Wheat Flakes with Red Berries | 0 | 35 | 15 |
| America's Choice Essentially You Crunchy Lightly Sweetened Rice Cereal | 4 | 35 | 15 |
| America's Choice Healthy Mornings Vanilla Almond Cereal | 0 | 35 | 15 |
| Cap'n Crunch's Chocolatey Crunch | 30 | 30 | 0 |
| Cap'n Crunch's Cinnamon Roll Crunch Sweetened Corn & Oat Cereal | 30 | 30 | 0 |
| Cap'n Crunch's Oops! All Berries Sweetened Corn & Oat Cereal | 35 | 35 | 0 |
| Clear Value Crisp Rice Cereal | 25 | 30 | 15 |
| Essential Everyday Bran Flakes Cereal | 100 | 100 | 15 |
| Essential Everyday Golden Corn Nuggets | 10 | 50 | 15 |
| Essential Everyday Good Day with Strawberries | 0 | 35 | 15 |
| Food Club Crisp Rice | 2 | 30 | 25 |
| Food Club Deluxe Banana Nut Medley | 20 | 30 | 25 |
| Food Club Deluxe Cranberry Almond Crunch Cereal | 10 | 30 | 20 |
| Food Club Essential Choice Bran Flakes | 100 | 100 | 25 |
| Food Club Essential Choice Toasted Rice Flakes | 4 | 35 | 15 |
| Food Club Essential Choice Vanilla Almond | 0 | 35 | 15 |
| Food Lion Crispy Hexagons Corn & Rice Cereal | 10 | 35 | 25 |
| Food Lion Enriched Bran Flakes Cereal | 100 | 100 | 25 |
| Food Lion Whole Grain 100 Cereal | 100 | 100 | 10 |
| General Mills Cheerios (single 1.1 oz serving in a plastic container)² | 30 | 35 | 10 |
| General Mills Fiber One, Honey Clusters | 50 | 50 | 0 |
| General Mills Total + Omega-3 Honey Almond Flax | 100 | 100 | 10 |
| General Mills Total Raisin Bran | 100 | 100 | 10 |
| General Mills Total Whole Grain | 100 | 100 | 10 |
| General Mills Wheat Chex | 35 | 25 | 10 |
| General Mills Wheaties | 50 | 50 | 10 |

|

General Mills Wheaties Fuel |